
12/2/2023 CUDA toolkit Docker

CRI-O is a lightweight container runtime that was designed to take advantage of Kubernetes’s Container Runtime Interface (CRI). (The NVIDIA drivers can be installed as part of installing CUDA, but the rest of the CUDA toolkit is not required.) Read this blog post for detailed instructions on how to install, set up and run GPU applications using LXC. To run a CUDA container from Docker Hub using LXC: `lxc-create -t oci cuda -- -u docker://nvidia/cuda`.

NOTE: The nvidia-docker2 package that is generated by this repository is a meta package that only serves to introduce a dependency on…

You will need to remove the old Docker packages and install nvidia-docker2 and nvidia-container-runtime. The install command starts with `sudo apt install --no-install-recommends \ …`. If you have nvidia-docker 1.0 installed, you need to remove it and all existing GPU containers first: `docker volume ls -q -f driver=nvidia-docker | xargs -r -I…`
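The point that the host only needs the driver, not the whole CUDA toolkit, can be checked with a small script. This is a minimal sketch, assuming that `nvidia-smi` (which ships with the NVIDIA driver) and `nvcc` (which ships with the CUDA toolkit) are reasonable stand-ins for "driver installed" and "toolkit installed". `has_cmd` is a hypothetical helper name used only for this illustration.

```shell
#!/bin/sh
# has_cmd is a hypothetical helper: it succeeds when the named command is on PATH.
has_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# nvidia-smi is installed with the NVIDIA driver; nvcc comes with the CUDA toolkit.
if has_cmd nvidia-smi; then
    echo "driver: found (nvidia-smi is on PATH)"
else
    echo "driver: nvidia-smi not found, install the NVIDIA driver first"
fi

if has_cmd nvcc; then
    echo "toolkit: found (optional on the host)"
else
    echo "toolkit: not found (not required on the host for GPU containers)"
fi
```

The script only reports what it finds and never installs or removes anything, so it is safe to run before and after the setup steps.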

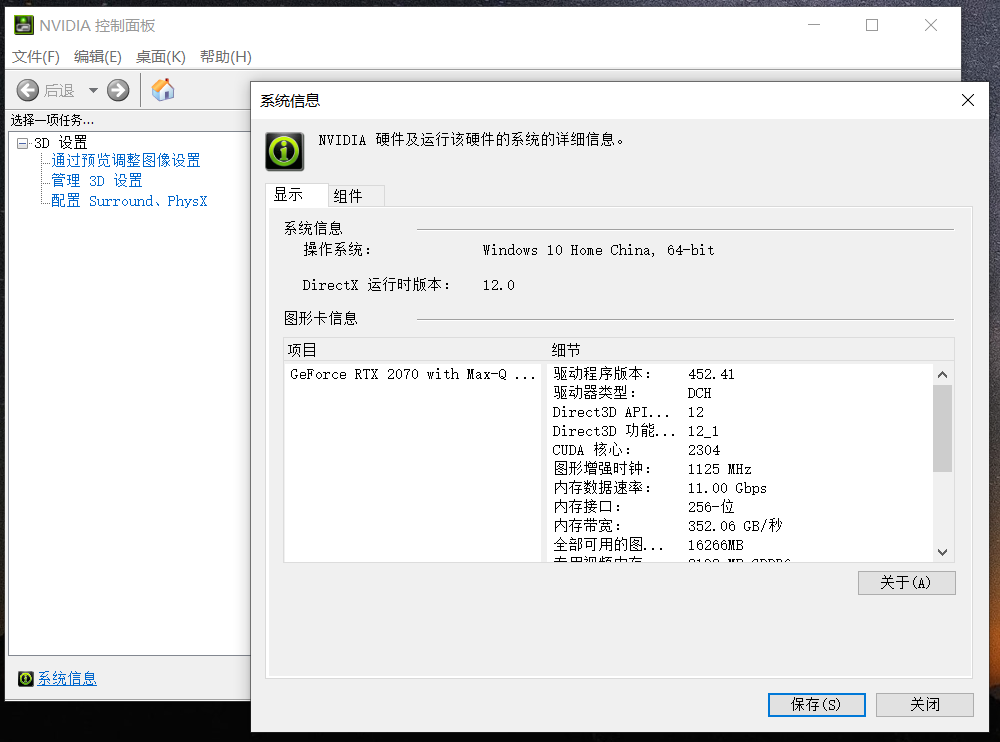

GPU isolation is achieved through the CLI option `--gpus`. Devices can be referenced by index (following the PCI bus order) or by UUID. For example, if you have 4 GPUs, to isolate GPUs 3 and 4 (`/dev/nvidia2` and `/dev/nvidia3`), run `docker run --gpus '"device=2,3"' nvidia/cuda:9.0-base nvidia-smi`. See the user guide for more information on these options.

For a package manager CUDA install method, installing the compatibility libraries is simple. For example, on Ubuntu, to install the CUDA 10.1 compatibility package to match a CUDA 10.1 toolkit install: `sudo apt-get install cuda-compat-10.1`. Make sure to match the version to the CUDA toolkit version that you are using (that you installed with the…

The CUDA Toolkit End User License Agreement applies to the NVIDIA CUDA Toolkit, the NVIDIA CUDA Samples, the NVIDIA Display Driver, NVIDIA Nsight tools (Visual Studio Edition), and the associated documentation on CUDA APIs, programming model and development tools.

I believe you already know this, but just to recap: How to set up a Docker image ready for RTX GPU and Fastai 2018 with PyTorch 1.0 nightly, Part 1: setting up the nvidia-drivers, nvidia-docker2 and nvidia-container-runtime. Note: if you already know Part 1, you can jump to Part 2. If you are on Linux, you only have to install the GPU drivers on your machine; you don't need the entire CUDA toolkit.

1- Install the drivers: `sudo add-apt-repository ppa:graphics-drivers/ppa -y`
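The GPU isolation option described above takes a device list whose quoting is easy to get wrong. The sketch below builds that value from a list of indices or UUIDs and prints the resulting command instead of running it. `gpus_value` is a hypothetical helper name for this illustration, not part of Docker or the NVIDIA tooling; the image tag is the one used in the example above.

```shell
#!/bin/sh
# gpus_value is a hypothetical helper: it joins its arguments (device indices
# or UUIDs) with commas and wraps them in the form docker's --gpus expects.
gpus_value() {
    list=""
    for dev in "$@"; do
        if [ -z "$list" ]; then
            list="$dev"
        else
            list="$list,$dev"
        fi
    done
    # Docker parses the --gpus value as comma-separated fields, so the inner
    # double quotes keep the whole device list together as a single field.
    printf '"device=%s"' "$list"
}

# Print (rather than run) the command that isolates the third and fourth GPUs.
echo "docker run --gpus '$(gpus_value 2 3)' nvidia/cuda:9.0-base nvidia-smi"
```

Printing the command first makes it possible to inspect the quoting before anything touches the GPUs; once it looks right, the printed line can be pasted into a shell on a host with Docker and the NVIDIA runtime installed.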
